Intersection

- Connecting the digital and physical worlds

- Connecting cities and citizens

- Connecting residents and neighborhoods

- Connecting airports and travelers

- Connecting people and information

- Connecting public transit and commuters

- Connecting brands and consumers

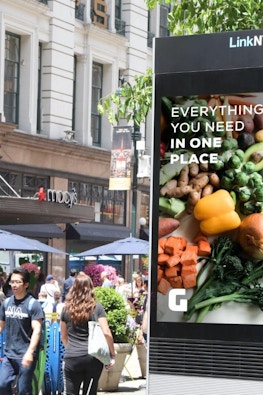

Intersection is an experience-driven out-of-home (OOH) media and technology company that delivers programming, consumer amenities, and advertising to cities.

Partners

Technology and media solutions for cities, transit systems, air travel, destinations, and other public spaces

Our Solutions

Advertisers

Out-of-home advertising for national brands, local brands, and advertising agencies

Media Offerings

In The News

All News

Esther Raphael on Personalizing Out of Home Content and Ads

October 10 · Billboard Insider™

Esther Raphael on Creating Good Out of Home Content

October 9 · Billboard Insider™

DOOH Advertising Blossoms, Fueled by Smart Cities: How to Cash in

September 25 · Digital Signage Today